Drupal SEO

"How good is Drupal for SEO?" The answer: great Drupal SEO is easy to implement with the right strategy, information and modules. However, Drupal makes it easy to shoot yourself in the proverbial SEO foot at the same time, so you have to be careful.

With the right information about how and where to tweak Drupal (along with an understanding that SEO is also about offsite factors, content, relationships, networking, and so on), you can have a lean, mean machine that outperforms and out-competes the competition.

This article will quickly explore the must-have Drupal SEO modules, and also look at some lesser-known Drupal-specific optimization issues that might help leapfrog your site to the front of the SERPs (Search Engine Results Pages).

Must-have Drupal SEO modules

Drupal 6 and Drupal 7 have a number of great features built into the core. However, there are several modules that are vital to excellent Drupal SEO performance:

Nodewords & Meta Tag

Nodewords (Drupal 6) and Meta Tag (Drupal 7) provide hugely important meta tag content: on-page markup that search engines read but visitors don't see.

In particular, you are able to configure and automate the meta keywords and description tags, as well as specify a canonical URL for each page. Canonical URLs are extremely useful because they help the search engines to decide which version of a page is the most important one (in the event of duplicates).

Global Redirect

Global Redirect is, IMHO, one of the most important search optimization modules available. It provides a host of important functionality that helps to:

- reduce duplicated content

- 301 redirect to node alias path

- 301 redirect to front page based on current URL

Instead of having multiple URL paths that all serve the same page (Drupal's default behavior, e.g. node/123, node/123/, node/path-alias, node/path-alias/, node/PATH-ALIAS, etc.), Global Redirect ensures that all variants of the same content are redirected to one URL.
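To make the idea concrete, here is a minimal Python sketch of that kind of normalization. It is not Global Redirect's actual code: the alias table, the function names and the exact rules (strip slashes, lowercase known aliases) are assumptions made purely for illustration.

```python
# Hypothetical alias table: internal Drupal path -> preferred alias.
ALIASES = {"node/123": "marketing/marketing-article"}


def canonical_path(path: str) -> str:
    """Return the single path that all variants of a page should resolve to."""
    cleaned = path.strip("/")          # drop leading and trailing slashes
    lowered = cleaned.lower()
    if lowered in ALIASES:             # internal path: use its alias
        return ALIASES[lowered]
    if lowered in ALIASES.values():    # known alias typed in a different case
        return lowered
    return cleaned                     # unknown path: leave it alone


def redirect_for(path: str):
    """Return (301, target) when the request is not canonical, else None."""
    target = "/" + canonical_path(path)
    return None if path == target else (301, target)
```

With this table, /node/123, /node/123/ and /MARKETING/Marketing-Article/ would all be answered with a 301 to /marketing/marketing-article, while a request for the alias itself is served directly.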

Path & Pathauto

Path is part of the Drupal core, and can be extended using Pathauto to automatically generate search engine and user friendly URL aliases.

Absolutely vital, and far more useful than the default node ID paths. More about how to use Path with Token a bit later.

Search 404

Search 404 is a useful little module that returns search results for URL paths that are not found. In effect, it provides keyword-based content instead of a bare 404 error page.

Boost

Boost is a must-have for any site that has predominantly anonymous traffic. Boost creates static cache pages and bypasses Drupal's processing for valid cached pages, improving your website page serving speed drastically.

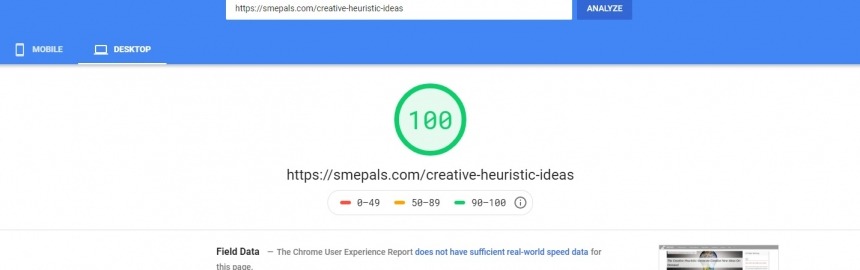

Since page load speed is an important part of ranking well in search, Boost is now crucial.

XML Sitemap

XML Sitemap allows you to easily create a sitemap that can be submitted to Google Webmaster Tools, and other search engines, to help them better crawl your website.

This module can help get your content indexed much quicker.
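For a feel of what such a sitemap contains, the following Python sketch builds a minimal one with the standard library. The module generates the real file for you; the function name and the example.com URLs here are placeholders, and only the required loc element of the sitemap protocol is shown.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls) -> str:
    """Return a minimal sitemap document listing the given page URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url   # one <loc> per page
    return ET.tostring(urlset, encoding="unicode")


sitemap = build_sitemap([
    "http://www.example.com/",
    "http://www.example.com/marketing/marketing-article",
])
```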

SEO Checklist

SEO Checklist provides a helpful and handy checklist of modules you should have installed, and activities you should perform to ensure that your site meets most best practices for search.

Search optimization tips & tricks

Not every aspect of SEO can be handled by a module. Sometimes, it's about how those modules can be used or combined into something that drives your pages up the search results. Let's take a look...

Tweaking Drupal paths

Did you know that you can use the Pathauto module to automatically rewrite URL aliases using tokens (requires the Token module)?

This is hugely beneficial. For example, you might want to replace the standard "content/" path with a taxonomy term. This would place content tagged with "marketing" into a path that always includes that term (e.g. /marketing/marketing-article), which is far more useful than "content".
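The mechanics of a token pattern can be sketched in a few lines of Python. This only illustrates the substitution idea; the [term] and [title] placeholders are made-up names, not real Drupal tokens, and the slug rules are an assumption rather than Pathauto's own cleaning logic.

```python
import re


def slugify(text: str) -> str:
    """Lowercase the text and replace runs of non-alphanumerics with hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def build_alias(pattern: str, tokens: dict) -> str:
    """Fill each [placeholder] in the pattern with its slugified value."""
    return re.sub(
        r"\[([^\]]+)\]",
        lambda match: slugify(tokens[match.group(1)]),
        pattern,
    )


alias = build_alias("[term]/[title]", {"term": "Marketing", "title": "Marketing Article"})
```

Here alias comes out as marketing/marketing-article, so every post tagged with the term lands under a keyword-rich path.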

.htaccess 301 redirects

It helps to know a bit about your .htaccess file. Usually the version you want to work on is found in the public folder (the root of your web directory). Make sure you set your server to redirect all requests to a single version of your domain (either with or without the www prefix), so that both versions end up at the same address.

This helps avoid duplicate content penalties because, from Google's perspective, the two versions of each URL could be completely different sites.
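The decision the server has to make is simple, and the Python sketch below models it. In practice the rule lives in .htaccess, not in Python; the host names and the choice of the www version as the preferred one are assumptions for the example.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumption: the www version is the one you want indexed.
CANONICAL_HOST = "www.example.com"
KNOWN_HOSTS = {"example.com", "www.example.com"}


def host_redirect(url: str):
    """Return (301, new_url) if the host needs redirecting, else None."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host in KNOWN_HOSTS and host != CANONICAL_HOST:
        # Keep the path and query string, swap only the host.
        return 301, urlunsplit(parts._replace(netloc=CANONICAL_HOST))
    return None
```

A request for http://example.com/about?page=2 is answered with a 301 to the same path and query on www.example.com, while requests that already use the preferred host pass through untouched.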

Control panels with contexts

Panels has a habit of returning a page result for virtually any URL that is even remotely related to its path. This is probably not the behaviour you want because it means that Google can happily make up thousands of fictitious URLs that Panels serves up with a 200 OK response.

These pages then find their way into Google's index. Thousands of them. An infinite number of them. All exactly the same page. Obviously, after a while Google's going to look at the index and say, "hmmm, this site contains almost exclusively duplicate content; it's not a good quality site".

Make sure you set a path-based selection rule that prevents Panels from serving anything other than the specific URL you want.

Blocking stub pages with robots.txt

Google, and other search engines, don't like stub pages - pages that don't contain much content, if any.

You might not think you have any, but it's easy for Drupal to create plenty without your knowledge. For example, are people allowed to create user accounts on your site? If so, then each user has a user page. If they don't fill out their profile, most of those user pages will be indexed by Google as "stub pages".

Without knowing it, you could have hundreds of stub pages harming your PageRank. There are plenty of other ways to build up stub pages in Drupal. You have to be vigilant.
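One way to check that a robots.txt rule really keeps crawlers away from those pages is to test it with Python's standard robotparser module. The rule below is an assumption for illustration (it blocks everything under /user/, where Drupal serves user pages); adapt it to whichever stub paths exist on your own site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content that blocks user profile pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /user/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(path: str) -> bool:
    """Return True if a generic crawler is allowed to fetch the path."""
    return parser.can_fetch("*", path)
```

With this rule, is_crawlable("/user/42") is False, while ordinary content such as /marketing/marketing-article remains open to crawlers.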

Writing content for Drupal

Assuming you have the Nodewords or Meta Tag module installed, you should always write your blog post or web page titles with keywords in mind.

In addition, since the first few sentences of any blog post will automatically be included as the page's meta description, which is often returned as part of search results, you need it to be something that attracts readers' attention and includes important keywords.
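To preview roughly what a search engine might show, you can trim the opening of a post to a fixed length. The sketch below is only an approximation: the 155-character limit is an assumption, not a documented cut-off, and the modules may build the description differently.

```python
import textwrap


def preview_description(body: str, limit: int = 155) -> str:
    """Collapse whitespace and cut the text at a word boundary near the limit."""
    return textwrap.shorten(body, width=limit, placeholder="...")


opening = (
    "Great Drupal SEO is easy to implement with the right strategy, "
    "information and modules. This article explores the must-have modules "
    "and some lesser-known optimization issues that can lift your rankings."
)
snippet = preview_description(opening)
```

If the keywords you care about fall outside the snippet, move them closer to the start of the post.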

Talking of writing content, here's a list of resources that will help you write for the Web and leverage that content to build relationships, generate buzz, and get to the top of Google's SERPs:

- Building backlinks the right way

- How to write a press release (and actually get publicity)

- How can I improve blogger outreach?

- Top 10 local SEO tips

- Influencer marketing 101

- How to blog

Canonical URLs vs. robots.txt

Whenever possible, use canonical URLs to indicate which page the search engines should rate the highest, instead of blocking duplicates. Google prefers to crawl all content so it knows more about your website. It understands there may be some innocent duplication, and, if it knows which is the canonical URL, it won't penalize you.

Disallow search engine access to paths and content that you know they should never need to crawl, like the user login page (you don't want the Google spider to open an account, do you?).

Pass SEO juice with 301 redirects

If you decide to change the structure of your website in any way, be sure to use 301 redirects (in your .htaccess file) to redirect links from the old URLs to the new ones. This will help to prevent Google from treating the restructured content as if it were brand new.

It also helps to prevent referral traffic (that links to the old URLs) from being sent to '404, page not found' pages on your site.
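When many pages move at once, it can be easier to generate the redirect lines than to type them by hand. The Python sketch below prints one Apache mod_alias Redirect directive per moved page; the paths and the example.com domain are placeholders, and it assumes your server has mod_alias enabled.

```python
def redirect_lines(moves: dict, base_url: str) -> list:
    """Build one 'Redirect 301' line per old path -> new path pair."""
    base = base_url.rstrip("/")
    return [
        f"Redirect 301 {old_path} {base}{new_path}"
        for old_path, new_path in sorted(moves.items())
    ]


# Hypothetical restructure: posts move from /blog/ to topic-based paths.
moves = {
    "/blog/old-post": "/marketing/old-post",
    "/blog/seo-tips": "/seo/seo-tips",
}
lines = redirect_lines(moves, "http://www.example.com/")
```

Each entry in lines can be pasted into .htaccess, for example: Redirect 301 /blog/old-post http://www.example.com/marketing/old-post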

Be wary of Google's Panda & Penguin algorithm updates

Basically, if what you're doing is not something that comes naturally in the course of researching, writing and sharing great content designed to help readers, then you are starting to edge closer to the grey area between being fine and being penalized.

However, implementing everything mentioned in this article will set your business blog or website a long way down the right path to success. Here are a bunch of articles and resources that can give you more insight into these two feared webspam algos:

- How to undo bad search optimization

- Don't let a Google penalty destroy your business

- Top 5 reasons your site is losing Web traffic to Google's Panda

- How to recover from a drop in page rankings

My own organic search traffic has doubled nearly every quarter for the last 18 months - a strong indication that the strategies and policies that I implement (for myself and my clients) are both effective and acceptable to Google and the other search engines (i.e. Google's Panda and Penguin updates actually help my blog posts bubble to the top of the SERPs).

Feel free to share any additional hints, tips, and SEO tricks in the comments below.
